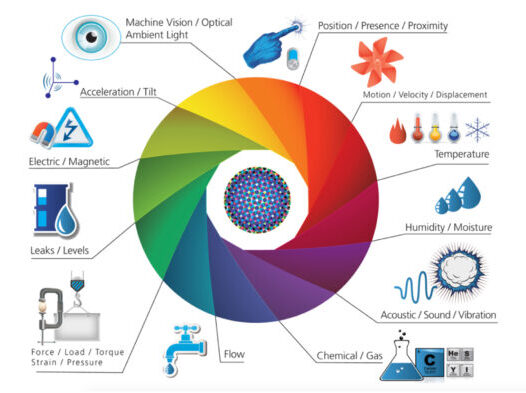

Networked sensors and sensor data fusion are driving new Smart System innovations enabling a whole new generation of applications that are self-sensing, self-controlling, self-optimizing and “self-aware”—automatically, without human intervention. As networks continue to invade the “physical” world, solution designers are seeing the new values that come from the growing interactions between sensors, machines, systems and people.

THE AGE OF EMBEDDED SENSORS (THE INTERNET OF THINGS 2.0)

The world is changing exponentially fast. One indication of the speed is the fact that we’re drowning in unprocessed information. We’re creating data at twice the rate we’re deploying traditional bandwidth to carry it, but almost none of the data created to date has been analyzed. More than half the data created by physical or operational systems loses any value that could be derived through analysis in less than a single second.

Yet according to the National Science Foundation, there will soon be trillions of sensors on the earth. And forecasters predict that in just a few more years there will be more processing power in smart phones than in all the servers and storage devices in data centers on the earth today. Ready or not, we’re rushing into the future of truly distributed systems and intelligence.

We Are Giving Our World a Digital Nervous System

source: Harbor Research

Revolutions always begin by sensing small things and drawing inferences. For example, there is great value in knowing how people use “white goods” like home appliances. If you embedded a microprocessor in the plastic of an electrical outlet, you’d have true local processing as opposed to processing in a remote cloud. From there, you could infer almost anything by sensing the electrical current “signature” and its usage profile—not just energy used, and whether a washing machine’s motor is about to fail, but the fact that the consumer just washed a load of whites versus a load of colored clothes. If you had an inkjet printer plugged into that same outlet, you could know whether the consumer was printing colored pages versus black-and-white.
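As a rough illustration of this kind of inference, the sketch below labels a trace of current samples by comparing two simple features (mean and peak current) against reference signatures, using nearest-centroid matching. The signature values, labels and function names are hypothetical and chosen only for illustration; a real system would learn its signatures from measured data and would likely use much richer features.

```python
import math
from statistics import mean

# Hypothetical reference signatures: (mean amps, peak amps) per activity.
# These numbers are illustrative assumptions, not measured values.
SIGNATURES = {
    "washer_whites": (6.0, 11.0),
    "washer_colors": (2.5, 5.0),
    "printer_color": (0.8, 1.6),
    "printer_mono": (0.4, 0.9),
}


def extract_features(samples):
    """Reduce a list of current samples (amps) to a (mean, peak) pair."""
    return (mean(samples), max(samples))


def classify(samples):
    """Return the label whose reference signature is closest to the trace."""
    features = extract_features(samples)
    return min(SIGNATURES, key=lambda label: math.dist(features, SIGNATURES[label]))


washer_label = classify([5.5, 6.5, 11.0, 5.8, 6.2])
printer_label = classify([0.3, 0.5, 0.9, 0.4])
```

With these assumed signatures, the first trace is labeled `washer_whites` and the second `printer_mono`. Because the features are so cheap to compute, this style of matching could plausibly run on a microprocessor in the outlet itself.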

Analysis of data from a “sweat patch” for measuring human perspiration can reveal the emotional state of an athlete wearing it, as well as the level of physical stress she’s under. If a worker is wearing the patch, you can see if they’re being exposed to the many dangerous substances that exist in factories, farms, and other workplaces.

Weather is another complex phenomenon that can be much better understood by correlating values collected from simple pressure, temperature and moisture sensors with spatial and temporal parameters that place the data in a richer context. I could be running a fleet based on weather forecasts, but if I add the data from sensor packs mounted directly on my vehicles, I’m less likely to be caught out by unexpected weather conditions. The closer systems are to real-time, the more efficient and cost-effective they can be. All such data, with its related context, has extraordinary value to everyone.
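One simple way to combine a regional forecast with a reading from a vehicle-mounted sensor is inverse-variance weighting, sketched below. This is only one possible fusion technique, swapped in here for illustration; it assumes the two error sources are independent, and the function name, temperatures and variances are hypothetical.

```python
def fuse(estimate_a, variance_a, estimate_b, variance_b):
    """Inverse-variance weighted fusion of two independent estimates.

    Returns (fused_estimate, fused_variance). The more certain input
    (smaller variance) receives the larger weight.
    """
    weight_a = 1.0 / variance_a
    weight_b = 1.0 / variance_b
    fused = (weight_a * estimate_a + weight_b * estimate_b) / (weight_a + weight_b)
    fused_variance = 1.0 / (weight_a + weight_b)
    return fused, fused_variance


# Hypothetical inputs: forecast says 2.0 °C (variance 4.0),
# the on-vehicle sensor reads -1.0 °C (variance 1.0).
road_temp, road_temp_variance = fuse(2.0, 4.0, -1.0, 1.0)
```

Here the fused estimate is pulled toward the local sensor (roughly -0.4 °C), which would flag a freezing road that the forecast alone would have missed.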

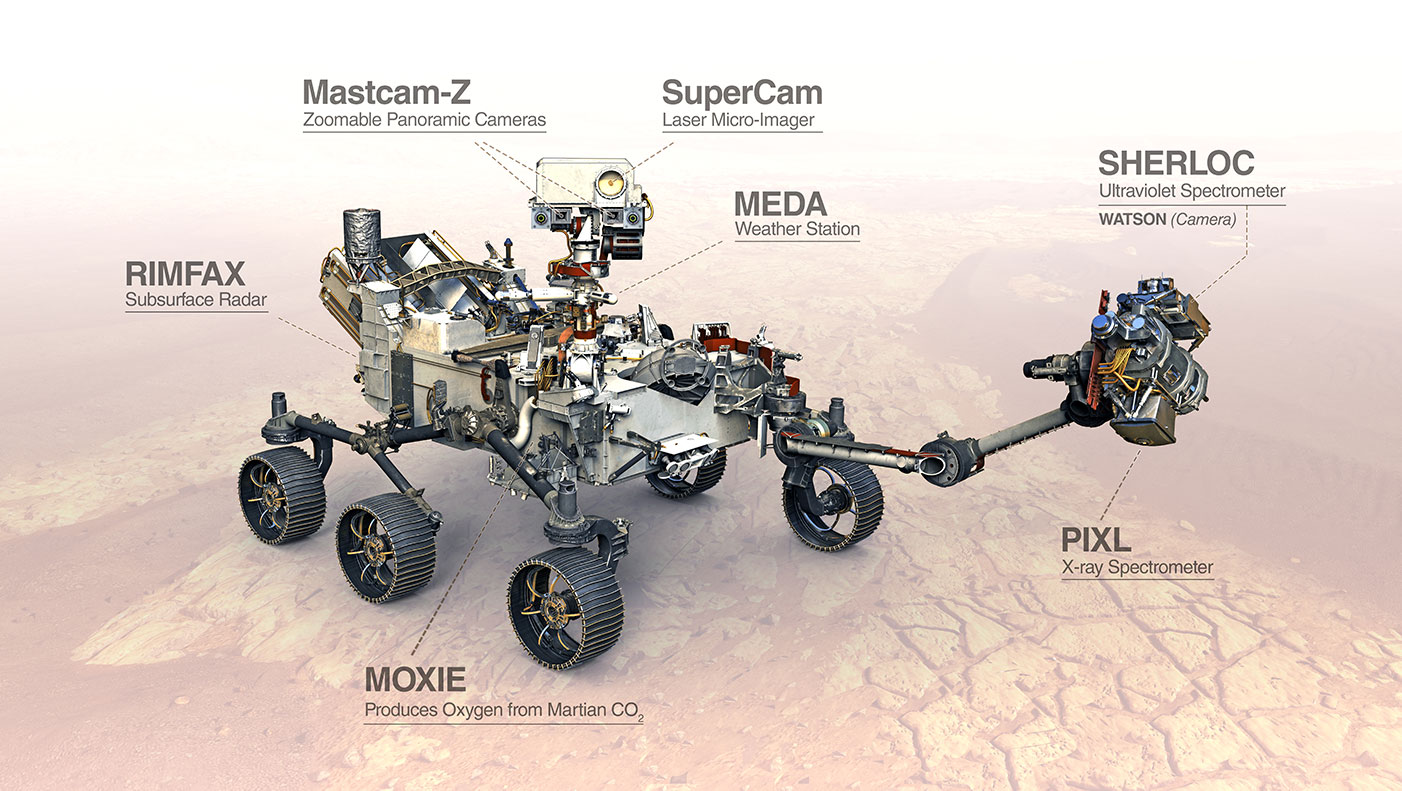

Perseverance Mars Rover Instruments

source: Harbor Research

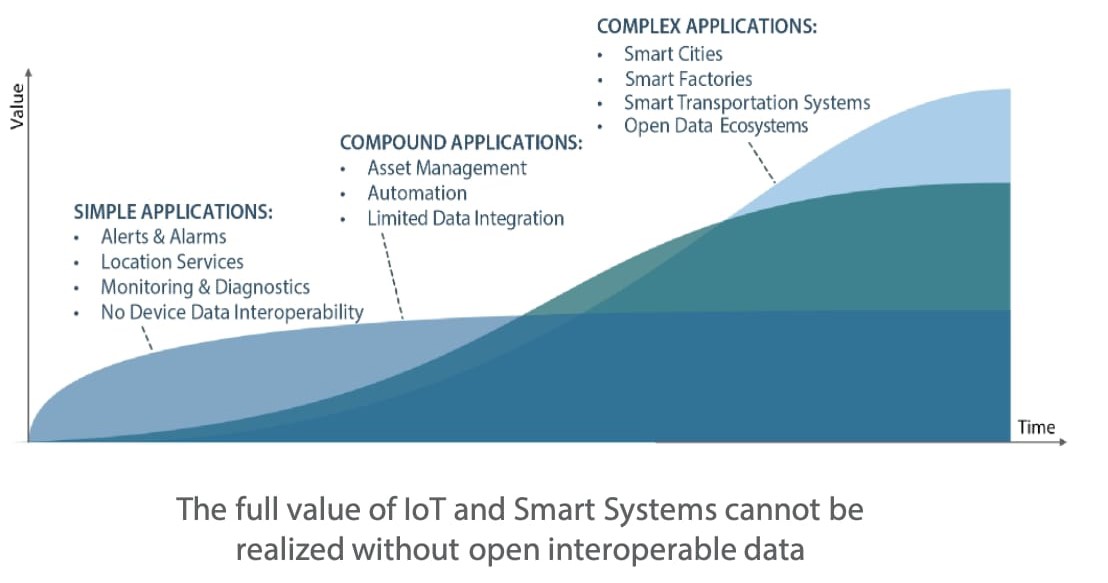

MACHINE-TO-MACHINE INTERACTIONS HELP PAVE THE PATH TO COMPLEX APPLICATIONS

As networks have invaded the “physical” world, system and solution designers are seeing the new values that come from the growing interactions between sensors, machines, systems and people. Electronic, mechanical and other related systems that used to have unique physical interfaces and components are now becoming digital and standardized.

The convergence of collaborative systems and machine communications will enable entirely new modes of services delivery and customer interactions, and the implications are enormous. No product development organization will be able to ignore these forces, nor will their suppliers. Product and service design will increasingly be influenced by the use of common components and subsystems. Vertically defined, stand-alone products and application markets will become part of a larger “horizontal” set of standards for hardware, software and communications.

The Evolution from Simple to Complex Applications

source: Harbor Research

Further, efficient support of products and equipment is only the first benefit of this trend. To conserve precious resources on this tiny planet, the world desperately needs better sensing, and soon. But it’s not happening, because IT understands the data that runs the corporation but not the gargantuan accumulation of tiny data emitted by the systems that run the world. All those systems interact, which creates context, which adds to the value. It’s not that the IT guys are stopping the world from innovating; it’s that they have no direct interest in integrating real-time inputs.

All of these trends lead us to a simple question: How well-prepared are manufacturers for the advent of Smart Systems and services, sometimes called the Internet of Things? We may think we know how to design Smart Systems, but many companies are finding this to be a serious challenge. For all the talk about silicon-based “intelligence” permeating every aspect of our lives, we still live in a brutally dumb world.

CONNECTIVITY AT THE EDGE POWERS EMBEDDED COMPUTING

“The edge” is actually part of a much larger distributed systems story that involves collecting and feeding data into context-sensitive computation to make things happen in the real world in real-time. This matters because 85-90% of the data now being generated by billions—and soon trillions—of connected devices will lose most of its value if it is not processed seamlessly in real-time.

The laws of physics (specifically, the speed of light) tell us that we cannot haul all that data across the network to the cloud via full-bandwidth transfer, and then send the computed results back to the real world in the same way, all during the millisecond or microsecond intervals when that time-sensitive data is most relevant. That’s one clear argument for processing this data close to where it’s generated. Furthermore, we now have many emerging applications—extended reality, autonomous robots and vehicles, precision agriculture, and the transport of perishable pharmaceuticals, to state only the most obvious—that absolutely demand this kind of distributed edge computing. They won’t work otherwise.
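The speed-of-light argument can be made concrete with a little arithmetic. The sketch below estimates the best-case round-trip propagation delay over optical fiber and checks it against a latency budget. The fiber speed (about two thirds of the vacuum speed of light) is a common approximation; the distances and budgets are hypothetical, and real networks add queuing, routing and processing time on top of this floor.

```python
# Approximate signal speed in optical fiber, in meters per second
# (roughly two thirds of the speed of light in vacuum).
FIBER_SPEED_M_PER_S = 2.0e8


def round_trip_ms(distance_km):
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    distance_m = distance_km * 1000.0
    return 2.0 * distance_m / FIBER_SPEED_M_PER_S * 1000.0


def fits_budget(distance_km, budget_ms):
    """True if propagation alone leaves the round trip within the budget."""
    return round_trip_ms(distance_km) <= budget_ms


cloud_rtt = round_trip_ms(1500)      # hypothetical data center 1,500 km away
cloud_ok = fits_budget(1500, 1.0)    # 1 ms control-loop budget
edge_ok = fits_budget(0.1, 1.0)      # edge node 100 m away
```

Under these assumptions, the distant data center costs about 15 ms in propagation alone, so it cannot serve a 1 ms control loop, while a node 100 meters away easily can.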

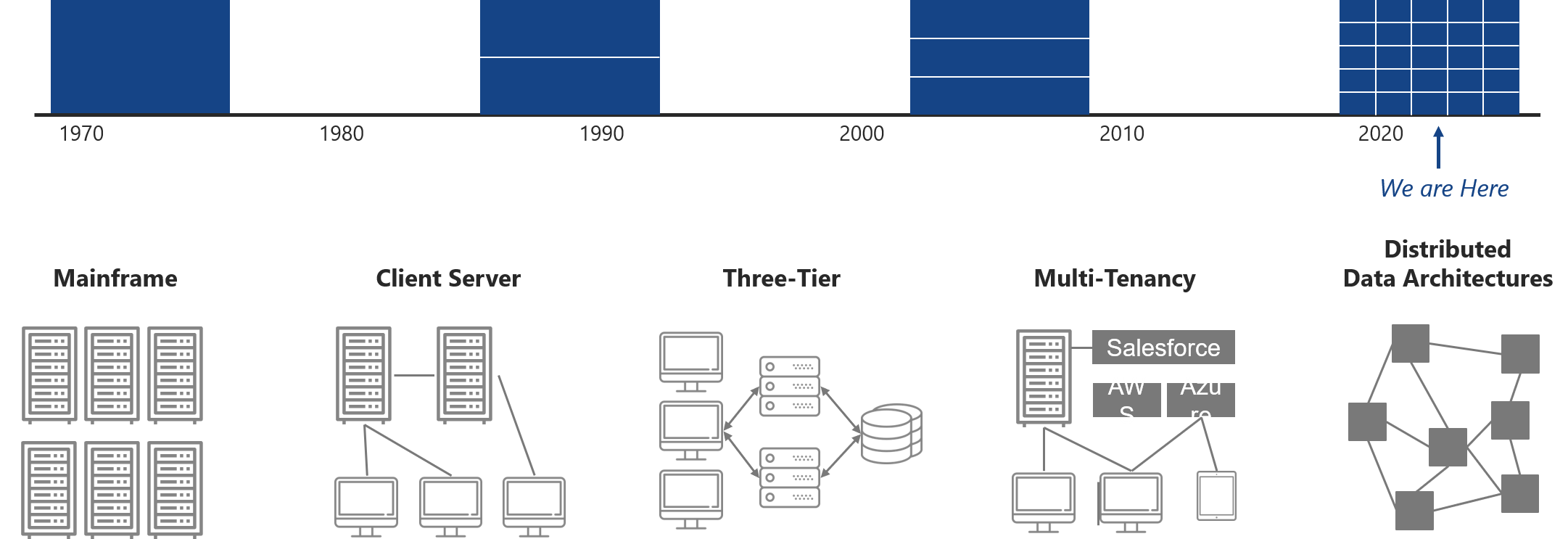

The History of Edge Technology

source: Harbor Research

THE EVOLUTION OF SMART SYSTEMS

As distributed systems evolve, the sensor and actuator devices will all become smart themselves, and the connectivity between them (devices, for the most part, that have never been connected) will become more and more complex. As the number of smart devices grows, the existing client-server hierarchy and all of the “edge” or “middle world boxes” acting as gateways, hubs and interfaces will quickly start to blur. In this future, client-server architecture of any kind will become superfluous; the days of hierarchical models are numbered.

Now, imagine a future Smart Systems world where sensors and devices that were once connected by twisted pair or current loops, or were hardwired, become networked, with all devices integrated onto one IP-based network (wired or wireless). In this new world, the “middle world boxes” don’t need traditional input/output (I/O) hardware or interfaces. They begin to look just like network computers running applications designed to interact with peer devices and carry out functions with their “herds” or “clusters” of smart sensors and devices.

We can readily imagine an application environment where there may be several “networked processors” (some embedded into equipment and some acting autonomously on a given machine). These networked processors will run applications that share their sensors and actuators, some even “sharing” a whole herd. In a smart building, for example, the processor in an occupancy sensor is used to turn the lights on, change the heating or cooling profile, or alert security.
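The smart building example can be sketched as a small publish/subscribe arrangement in which one occupancy reading is delivered to several independent applications. Everything here is hypothetical: the `SensorBus` class, the topic name and the three application functions are stand-ins for illustration, and an in-process object takes the place of a real network.

```python
from collections import defaultdict


class SensorBus:
    """Tiny in-process publish/subscribe hub (a stand-in for a real network)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        """Register an application callback for a sensor topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic, reading):
        """Deliver one reading to every application sharing this sensor."""
        return [handler(reading) for handler in self._subscribers[topic]]


def lighting_app(occupied):
    return "lights on" if occupied else "lights off"


def hvac_app(occupied):
    return "comfort profile" if occupied else "setback profile"


def security_app(occupied, after_hours=True):
    # Assumes the building is closed; a real app would check a schedule.
    return "alert security" if occupied and after_hours else "no alert"


bus = SensorBus()
for app in (lighting_app, hvac_app, security_app):
    bus.subscribe("room-101/occupancy", app)

actions = bus.publish("room-101/occupancy", True)
```

A single occupied reading yields three actions, one per application, without the sensor knowing which applications exist. That decoupling is what lets peers share a "herd" of sensors rather than each owning its own.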

In this evolving architecture, the network essentially flattens until the end-point devices are merely peers and a variety of applications reside on one or more networked application processors. For all intents and purposes, these processors will look like today’s router/modems, industrial PCs or small “headless” high-availability distributed servers, but will be increasingly powerful and better able to host applications thanks to embedded functions. In truth, if we are to achieve truly distributed computing, we must enable the actual devices and equipment at a truly embedded level, such as on a microcontroller embedded within a device or machine.

We believe it will resemble something closer to an organic system, with an architecture that matches the structure of the physical world. All of the sensors and actuators will become smart themselves, and the connectivity between devices will become increasingly complex. As the numbers of smart devices grow, the existing client-server hierarchy — devices, gateways, hubs, etc. — will quickly start to blur. In this future, the need for client-server architecture will become superfluous, and so the days of hierarchical computing models are numbered.

Value-added applications will spring to life in environments where the right resources (data) find the right nutrients (software applications) that deliver financial value. In reality, these applications will occur at all levels of the architecture, from the device to the cloud. Exactly where they originate, and to what extent the data remains there or is later post-processed elsewhere, will be dictated on the fly by the nature of the data and the application requirements moment-to-moment. Acceptance of this reality is essential to the effective design of software for data management, analytics and collaborative systems.

WHAT THE FUTURE REQUIRES

What are the core enabling technologies required to overcome these hurdles?

- Higher-performance, higher-quality and more reliable mission-critical wireless networks that enable new, smarter sensors and sensor data fusion tools

- More “democratized” distributed data and information architecture standards to inform data sharing and data fusion for analytics and machine learning

- Easier and less costly data management, transformation and analytics application development tools

- A new generation of integration and equipment management platforms that enable free flowing data discovery, data aggregation, integration and fusion and collaborative application development

While all of the factors listed above will contribute to OEM and end customer adoption of new Smart Systems and IoT technologies, our analysis also points to several broader market development challenges that must be overcome to realize the full value of new enabling technology:

- Challenges within OEMs in adopting new business, revenue and operating models

- Complex services delivery ecosystems that require new and different relationships

- Anticipation of smart services and systems innovation, as well as new growth venture modes not widely adopted today

- A fragmented digital and IoT vendor landscape, and in particular a lack of understanding within the IT and telecom technology development community of how these new, more “distributed” and “participatory” systems will work

- Requirements for more vertically-focused solutions developed from “horizontal” enablers

The potential scale of the Smart Systems and IoT opportunities for OEMs is enormous, but utterly dependent on new technology innovations. ◆
