THE FUTURE OF MACHINE INTELLIGENCE

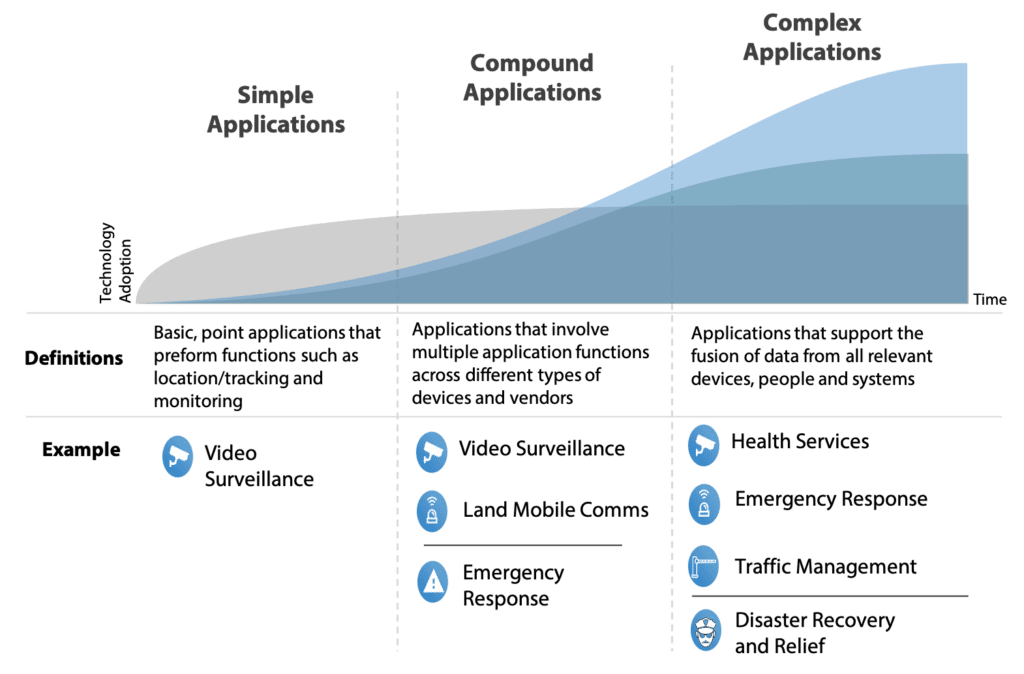

In the Smart Systems and IoT arena today, most networked machine applications are limited to simple remote monitoring and maintenance services, including alerts, alarms and remote diagnostics, as well as tracking and location services. This limitation stems from several factors, including technical complexity, business model challenges and a lack of significant embedded intelligence in machines. Existing technology has proven cumbersome and costly to apply, with many conflicting protocols and incomplete component-based solutions. The challenges of gathering machine data and integrating diverse data types have been major adoption hurdles for customers who want to analyze the data from machines and systems.

The return from simple applications, while valuable, is limited to the manufacturer’s service delivery efficiency. Contrary to what current market offerings suggest, however, the value of connectivity does not have to end with simple applications focused on a single class of device. Moving from “simple” to “compound” applications involves multiple collaborating machines and systems, with significant interactions among devices, systems and people. The focus is no longer solely on the machine builder’s ability to support its product efficiently. Rather, value reaches the customer through business process automation and optimization.

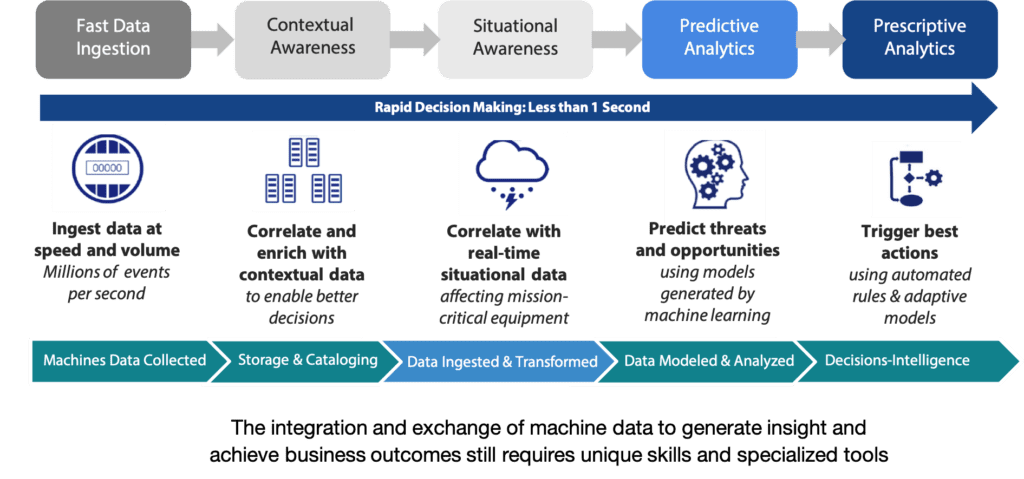

As technologies mature, particularly embedded computing and software tools, machines will continue to evolve toward much higher levels of intelligence. As machines become more complex, so too will the challenge of extracting intelligence from their data. Because more advanced intelligent machines produce a variety of complex “semi-structured” data in a relatively predictable manner, machine data is an ideal “staging” area for designing, building and deploying a new generation of advanced data transformation, management and analytics tools.

Analyzing why an asset has failed requires investigating the patterns and hidden signs within machine data. There is a huge difference between the world of asset monitoring, which is driven by sensor and simple log data, and the world of advanced analytics. This difference is dictated by data sources: sensor and simple log data can provide alerts that something has gone wrong, but only complex machine log data can be used to uncover and address the root cause of the failure. Furthermore, complex machine log data provides a much richer context than sensor data. For example, sensors cannot show which applications within an asset’s operating system are being underutilized, but machine data can reveal these usage patterns and suggest operational improvements for users.
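The contrast between a sensor alert and log-based investigation can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular product: the record types `SensorReading` and `LogEvent`, the functions `first_alert` and `events_before`, the temperature threshold and the sample messages are all hypothetical. The sensor side can only report that a limit was crossed; the log side supplies the events that led up to it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class SensorReading:
    """Hypothetical sensor sample: a timestamp and one temperature value."""
    time: datetime
    temperature_c: float


@dataclass
class LogEvent:
    """Hypothetical machine log entry with a component name and free text."""
    time: datetime
    component: str
    message: str


def first_alert(readings: List[SensorReading], limit_c: float) -> Optional[datetime]:
    """Return the time of the earliest reading above limit_c, or None."""
    for reading in sorted(readings, key=lambda r: r.time):
        if reading.temperature_c > limit_c:
            return reading.time
    return None


def events_before(events: List[LogEvent], alert_time: datetime,
                  window: timedelta) -> List[LogEvent]:
    """Return log events in the window leading up to the alert, oldest first."""
    start = alert_time - window
    ordered = sorted(events, key=lambda e: e.time)
    return [e for e in ordered if start <= e.time <= alert_time]


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 12, 0)
    readings = [
        SensorReading(t0, 61.0),
        SensorReading(t0 + timedelta(minutes=10), 95.0),  # crosses the limit
    ]
    events = [
        LogEvent(t0 + timedelta(minutes=6), "cooling", "fan speed dropped"),
        LogEvent(t0 + timedelta(minutes=8), "scheduler", "job queue backing up"),
    ]

    alert = first_alert(readings, limit_c=90.0)
    if alert is not None:
        # The alert says *that* something went wrong; the log context hints at *why*.
        for event in events_before(events, alert, timedelta(minutes=5)):
            print(event.time.isoformat(), event.component, event.message)
```

In practice the log context would feed a richer analysis than a fixed time window, but even this simple join shows why the two data sources answer different questions.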

Advanced forms of machine data will evolve beyond simple sensor and log data and will become far richer. This opens up opportunities for many diverse and valuable applications. These compound applications will involve more complex machines (such as medical imaging machines) as well as significant interactions among many simple and complex machines and data sets (combining, for example, data from medical imaging, diagnostic monitoring and patient records), creating new collaborative business model opportunities with the potential to drive much greater value for the customer.

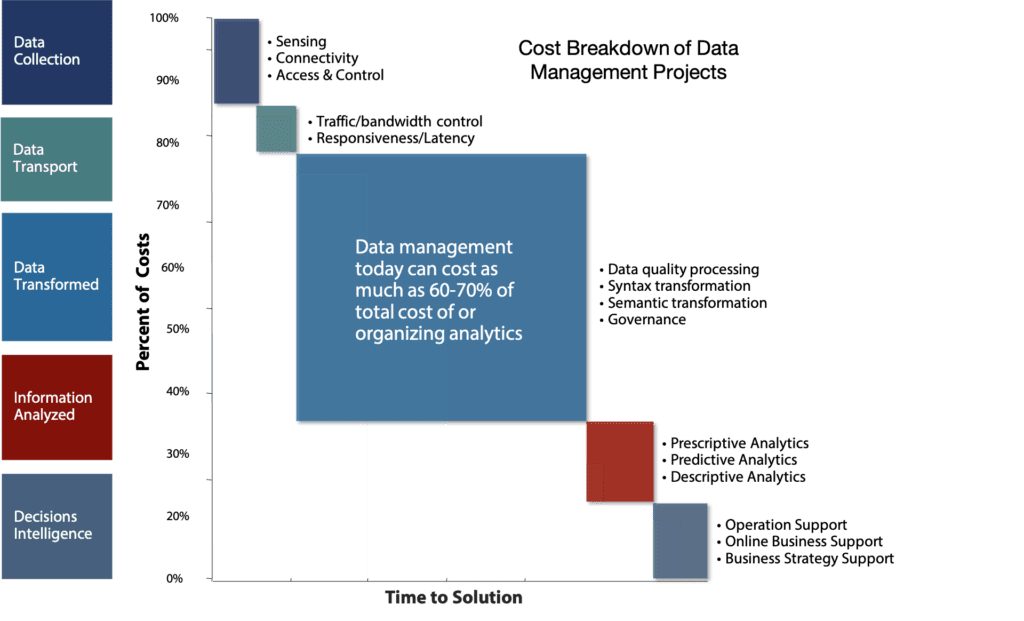

A major driver of the need for new data management tools is the diversity of data types users want to analyze. Machine and sensor data cover a broad range of data types and structures, arrive in diverse formats, and are often analog and high-velocity. Traditional data transformation and orchestration tools and techniques do not handle these characteristics well.
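To make the format-diversity problem concrete, the sketch below maps three hypothetical line formats (a JSON object, space-separated `key=value` pairs, and a comma-separated `source,metric,value` triple) onto one flat record. The function name `normalize`, the field names and the sample lines are assumptions made for illustration only; real machine data is far messier, which is exactly the difficulty described above.

```python
import json
from typing import Dict


def normalize(raw: str) -> Dict[str, str]:
    """Map one raw line in any of three assumed formats to a flat dict of strings."""
    raw = raw.strip()
    if raw.startswith("{"):
        # Assumed format 1: a single JSON object per line.
        return {str(k): str(v) for k, v in json.loads(raw).items()}
    if "=" in raw:
        # Assumed format 2: space-separated key=value pairs.
        # A token without "=" raises ValueError; a real tool would need a policy for that.
        return dict(pair.split("=", 1) for pair in raw.split())
    # Assumed format 3: exactly three comma-separated fields.
    source, metric, value = raw.split(",")
    return {"source": source, "metric": metric, "value": value}


if __name__ == "__main__":
    samples = [
        '{"source": "mri-1", "metric": "temp", "value": 41.5}',
        "source=pump-7 metric=rpm value=1200",
        "valve-3,pressure,2.1",
    ]
    for line in samples:
        print(normalize(line))
```

Every new format adds another branch and another set of failure cases, so hand-written rules of this kind stop scaling quickly as the variety of machines grows.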