“The edge” is actually part of a much larger distributed systems story about making things happen in the real world in a time-synchronized way. Why is this important? As niolabs founder Doug Standley tells us, 85-90% of the data now being generated by billions (and soon trillions) of connected devices loses most of its value if it is not processed seamlessly in real time.

And then there’s privacy.

WHAT IS EDGE COMPUTING AND WHY SHOULD YOU CARE?

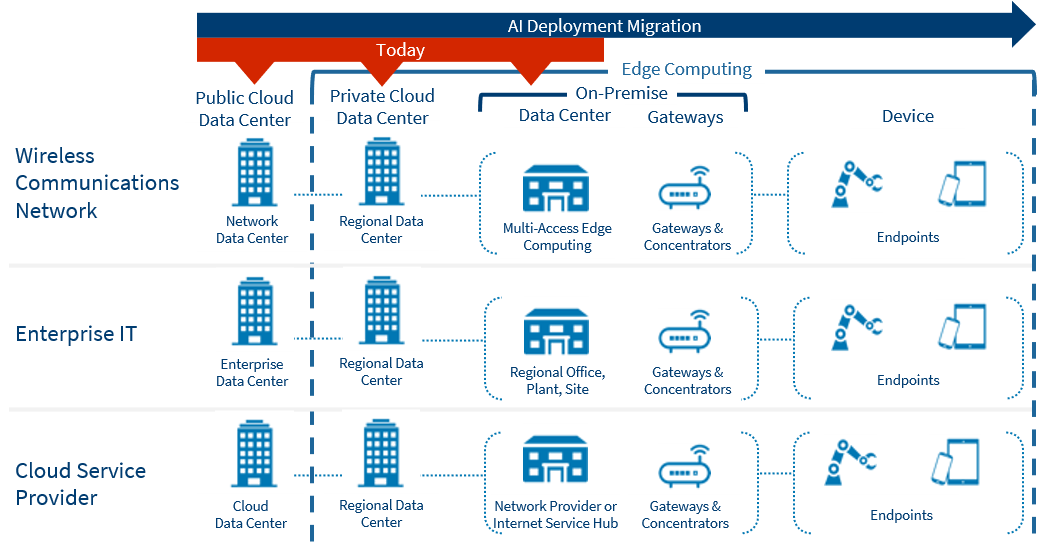

“Edge computing” has always been a slippery term, partly because there is no single location that can be called the “network edge.” Any network has many potential edges. What edge computing actually refers to is performing data processing as close to where the data is produced as the application in question requires. Not every sensor needs computing capability parked right next to it; some do and some don’t, and it depends entirely on context.

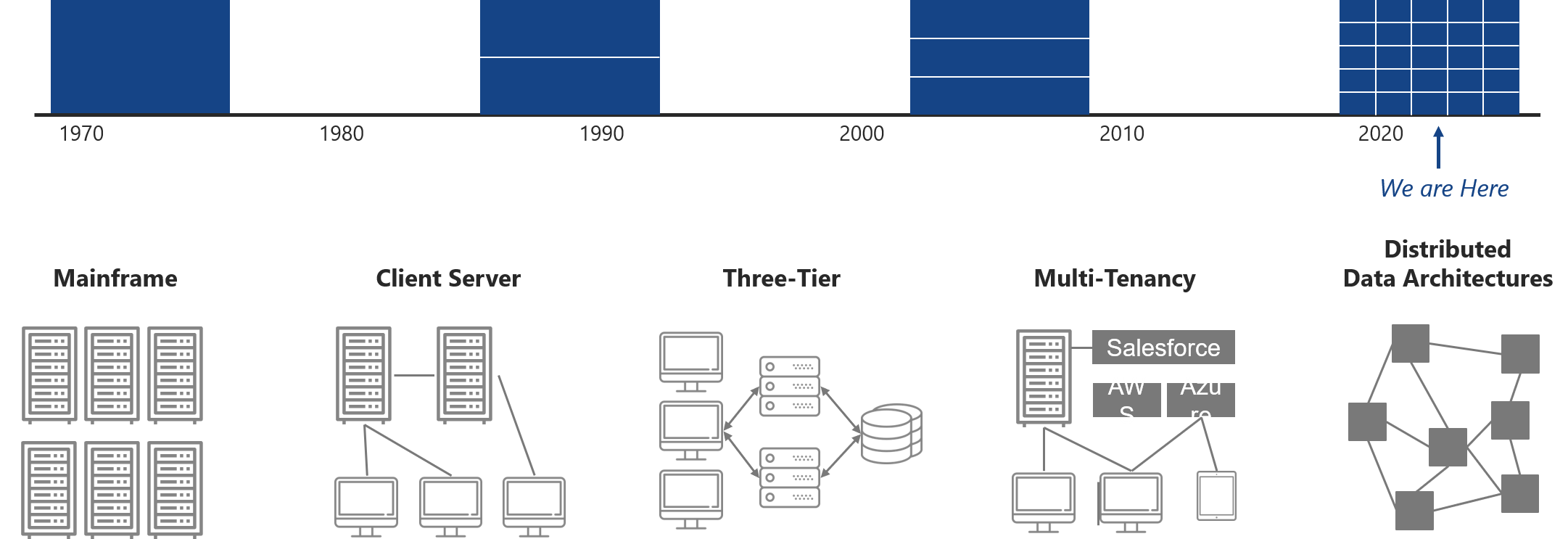

Information Technology (IT) grew up in a batch-processing environment, where limited quantities of available data were aggregated and used to predict what would happen tomorrow based on what happened yesterday. That is the opposite of the real-time world we’re moving toward. Seen in that light, edge computing may look like the hot new thing that will finally take us beyond the legacy client-server world, which is supposedly gasping its last breath, even though corporate cloud computing is an extension of, not a departure from, that legacy.

But “the edge” is actually part of a much larger distributed systems story that involves collecting and feeding data into context-sensitive computation to make things happen in the real world in real time. Why does this matter? Because 85-90% of the data now being generated by billions (and soon trillions) of connected devices will lose most of its value if it is not processed seamlessly in real time.

The laws of physics (specifically, the speed of light) tell us that we cannot haul all that data across the network to the cloud, compute on it there, and send the results back to the real world within the millisecond or microsecond windows when that time-sensitive data is most relevant. That’s one clear argument for processing this data close to where it’s generated. Furthermore, many emerging applications, such as extended reality, autonomous robots and vehicles, precision agriculture, and the transport of perishable pharmaceuticals (to name only the most obvious), absolutely demand this kind of distributed edge computing. They won’t work otherwise.
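To put rough numbers on the speed-of-light argument, here is a minimal Python sketch of the propagation-only lower bound on round-trip time. It assumes signals in optical fiber travel at about two-thirds of the vacuum speed of light and ignores queuing, routing detours and processing time, so real-world latencies are always higher. The distances and labels are illustrative assumptions, not measurements from any deployment.

```python
# Propagation-only lower bound on round-trip latency over optical fiber.
# Assumption: light in fiber travels at roughly 2/3 of its vacuum speed
# (refractive index ~1.5). Queuing, serialization, routing detours and
# compute time are ignored, so real latencies are always higher.

SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_VELOCITY_FACTOR = 2 / 3  # assumed typical value for silica fiber


def min_round_trip_ms(distance_km: float) -> float:
    """Return the minimum round-trip time in milliseconds for a one-way distance in km."""
    speed_km_per_s = SPEED_OF_LIGHT_KM_PER_S * FIBER_VELOCITY_FACTOR
    return 2 * distance_km / speed_km_per_s * 1000


if __name__ == "__main__":
    # Hypothetical distances from a sensor to where its data gets processed.
    sites = [("on-premises edge node", 0.1), ("metro edge site", 50), ("regional cloud", 1000)]
    for label, km in sites:
        print(f"{label:>22}: {min_round_trip_ms(km):.4f} ms")
```

Even with zero processing time, a data center 1,000 km away cannot answer in less than about 10 ms, while a node in the same building adds only about a microsecond of propagation delay. That gap is why microsecond-scale control loops have to close locally.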


PRIVACY AND SECURITY

But the mere movement of data is not the only issue at play. On-the-fly contextualization of data, including the establishment of privacy rights and filters, is now a large social concern in its own right. Thanks to the behavior of certain monopoly tech companies, we have reached the point where privacy, particularly user-configurable privacy, now matters deeply—all the way up to the right of individuals to obfuscate certain kinds of personal data. The days of “trust the network and cloud providers to be good stewards of your information” are clearly over.

We need to move data sovereignty back to the user, whether that user is an individual or an enterprise. So far, however, the owners of the big pipes and the big data centers have not been willing to transfer that right. We expect regulatory pressure to do so very soon, not just from the UK but globally. And that can’t be done if the data, in flight, is moved across the entire network to be processed and stored by someone else. Those data rights have to move as close to the point of content creation as they can get, and edge computing is the only way to do that.
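One way to picture “data rights close to content creation” is a user-configurable filter that runs on the edge device and decides, field by field, what may leave it. The Python sketch below is purely hypothetical: the `Rule` values, the `apply_policy` function and the laundry-room field names are invented for illustration and do not describe any niolabs or vendor API. It assumes a deny-by-default policy and pseudonymizes identifiers with an HMAC keyed by a secret that stays on the device.

```python
import hashlib
import hmac
import secrets
from enum import Enum


class Rule(Enum):
    """Hypothetical per-field privacy rules a user could configure."""
    ALLOW = "allow"          # send the field unchanged
    PSEUDONYMIZE = "pseudo"  # replace with a keyed hash; the key stays on the device
    DROP = "drop"            # never transmit the field


def apply_policy(record: dict, policy: dict, device_key: bytes) -> dict:
    """Return the subset of `record` the user allows to leave the device.

    Fields missing from the policy are dropped (deny-by-default).
    """
    outbound = {}
    for field, value in record.items():
        rule = policy.get(field, Rule.DROP)
        if rule is Rule.ALLOW:
            outbound[field] = value
        elif rule is Rule.PSEUDONYMIZE:
            digest = hmac.new(device_key, str(value).encode("utf-8"), hashlib.sha256)
            outbound[field] = digest.hexdigest()
        # Rule.DROP: skip the field entirely
    return outbound


if __name__ == "__main__":
    device_key = secrets.token_bytes(32)  # generated and kept on the edge device
    policy = {
        "appliance": Rule.ALLOW,
        "cycle_minutes": Rule.ALLOW,
        "household_id": Rule.PSEUDONYMIZE,
        "street_address": Rule.DROP,
    }
    reading = {  # hypothetical sensor record from a laundry room
        "appliance": "washer",
        "cycle_minutes": 52,
        "household_id": "H-1042",
        "street_address": "12 Example Lane",
        "wifi_ssid": "home-net",
    }
    print(apply_policy(reading, policy, device_key))
```

Because the filter runs before transmission, the street address and unlisted fields such as `wifi_ssid` never cross the network; the cloud sees only what the user’s policy allows. Deny-by-default means a newly added sensor field stays private until the user opts in.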

In the near future, the user experience is going to change radically. Users will find themselves controlling much of their own online experience by defining self-directed advertising and other forms of personalization that will be accomplished on the edge with “in-flight” content, contextualized in microseconds.

The data behind this experience need to be deployed as flexibly as possible within that context: not just available, but easy to fuse or combine in ways that today’s systems are simply not organized to offer in a liquid, responsive way. This is why the sheer complexity of the data becomes the biggest inhibitor of much of edge computing’s potential value. Here too, changing how we think about data complexity and data processing changes the story significantly.

If you put ten IT professionals in a room today, you’d probably get fifteen definitions of the edge, most of which would originate in “the network edge” rather than in anything to do with “apps.” And yet apps are where data and networks interface with human beings. For this reason, we at Harbor hope that the sock will soon be turned inside out, and that the definition of the edge will expand beyond the network edge to the “computational edge.”


A KILLER APP THAT ANYONE CAN UNDERSTAND

Many of edge computing’s “killer apps” would strike ordinary citizens as tediously arcane. But what if we were talking about something as quotidian as laundry? Doug Standley, the founder of niolabs, told us about an edge application his firm deployed for a consumer products company that wanted insight into how consumers used their laundry facilities. In particular, the company wanted to understand the impact of the COVID pandemic on its buyer population.

Niolabs installed its test edge processor in 1,000 randomly selected, statistically representative laundry rooms. The results greatly improved the client’s understanding of what the pandemic’s “stay at home” orders meant for how consumers thought about laundry products and how they went about buying them. Working from this new data stream, niolabs’ client was able to adjust its forecasts and modify its manufacturing orders, and as a result grocery stores never ran out of its products. (Imagine if toilet paper manufacturers had had an equivalent stream of high-resolution data!)

During this edge-processing project, niolabs also picked up on a packaging problem: a complementary laundry product was sold in the wrong package size, and stores weren’t replenishing it until it was time to replenish the laundry detergent itself. This was a revelation to the manufacturer, and it quickly fixed the packaging mismatch. As a result, in the first two months of COVID those two laundry products alone realized $145 million of benefits for this consumer products company. To repeat: $145 million of benefits based on research data from 1,000 homes, without asking consumers to change their behavior one bit. That could not have been done without edge processing.

A final note on getting your laundry done. Consumer packaged goods (CPG) companies, including manufacturers of laundry products, are feeling constrained by their distribution model. Why can’t laundry products be sold direct-to-consumer? Of course they can. But to do that, you don’t want to guess; you want to know what the consumer is actually using. Two billion houses in the world have dedicated laundry rooms, and those rooms are the second-largest consumers of water and power in the home. So why do we still have dumb laundry rooms?

Our forecast is that this won’t continue to be the case for long. In the very near future we’re going to see intelligent laundry rooms enabled by processing consumer usage behavior at the edge, right where it occurs, in the consumer’s home.

This essay was drawn from our recent podcast with Doug Standley. Listen to our full conversation with Doug to hear more ideas on the next edge killer app and other news from the future.