In their fifteen years of existence, smartphones taught us that “being mobile” and having the internet in our pockets offers enormous benefits for everyone. But the smartphone also showed us that, perhaps more than ever, we’re slaves to our screens. Years after evolving from being chained to a desktop, we still need to liberate ourselves from doing everything on the computer’s terms.

DOING EVERYTHING ON THE MACHINE’S TERMS

Human-computer interfaces have been a story of alienation: The computer exists outside us and everything we do is on the machine’s terms. If we turn our head from its fixed screen, the machine ceases to exist. If its keyboard breaks, disconnects or fails to appear on-screen because of a bug, making ourselves understood is close to hopeless. If it has a voice-based assistant and we try to talk to that to bypass the keyboard, wish us luck.

In software it’s even worse, especially because it doesn’t have to be. How many nested dialog boxes must we travel down to change one little thing in a file? Yes, of course there are shortcuts we could define to do it quickly, but the process of setting those up is so onerous that most users would rather suffer than learn how.

And to make it up to us, the vendors of the most user-friendly devices maintain 24/7/365 phone support, elaborate community web sites, whole archives of instructional videos, and in-person genius bars for hand-holding the poor human customers.

PERVASIVE COMPUTING BECOMES SPATIAL COMPUTING

However, things are about to get much better, and that’s not a joke. The reason is something you’ve heard us talk about before: Mature and well-aligned technologies. The process that gave us functioning computers in the first place has evolved to where computers are about to do what they always promised: Disappear.

We’ve been prophesying pervasive or ubiquitous computing for more than thirty years, and in that time our understanding of the phenomenon has evolved into spatial computing. In the last decade, for example, the success of various unicorn startups—for vehicle and residence sharing, home task services, on-demand food delivery, etc.—has depended upon the digitization of spatial information about the real world. Or take the emerging phenomenon of “digital twins” where one of the main benefits is that their interaction rules, transparency and verifiable history are synced to their physical counterparts in physical space.

The interface to this digitized world has so far been the smartphone, an invention so obvious and rapidly adopted that it’s difficult to remember when it didn’t exist. But that was a mere fifteen years ago. In that time, the “mobile revolution” taught us the power of carrying the internet around in our pockets.

But it has also revealed that we are still doing things on the computer’s terms. Indeed, we are even more tethered to our devices than we were before. Fifteen years ago it was space-age to have the web in our hands, but staring at our handheld rectangles as we move through physical space takes our attention off the world we naturally live in.

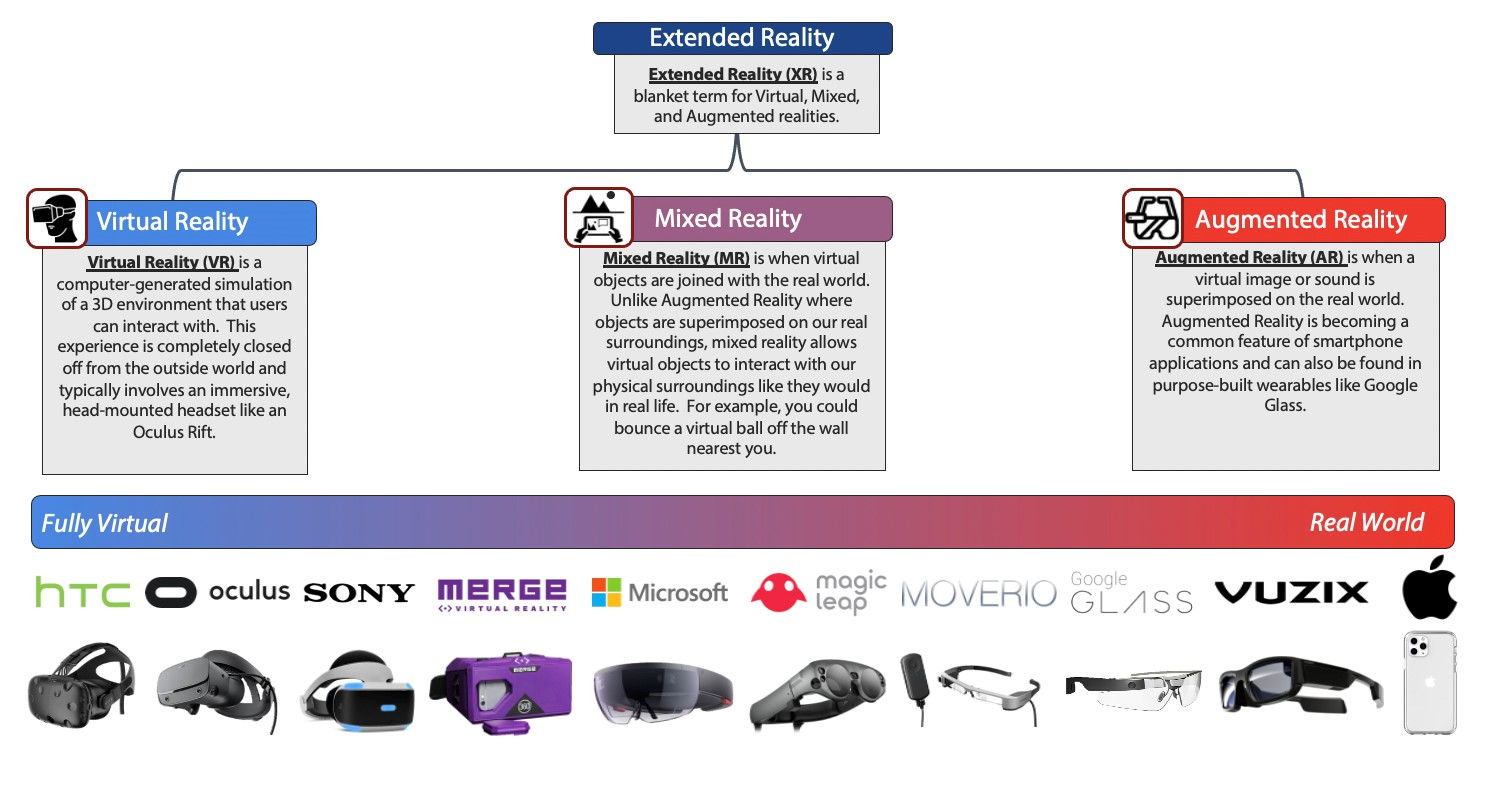

Breaking Down the Extended Reality Universe

THE NEXT HARDWARE PLATFORM WILL BE SMART GLASSES

It’s now painfully clear that smartphones are a transitional technology. Looking down at a map on your phone while crossing a busy city street is a not-so-subtle instance of human beings modifying their natural, biology-based behavior to accommodate a computer. And yet having that map in front of you answers a fundamental human need.

Consider that:

- Humans have binocular vision with depth perception

- 30% of the human brain is dedicated to the visual system

- 70% of human sensory receptors are in the eyes, and

- 50% of the brain is involved in visual processing

This suggests that we need a technology interface that matches our biology—specifically a 3D binocular interface. Obviously, connected eyeglasses rush to mind as the next hardware computing platform—not because they are “sexy” or “more exciting” but because they are biologically determined. Anything else will just be too cripplingly inefficient.

Further, the time has almost come. At the exact same moment that 5G network technology is proliferating, the cost of spatial interfaces in the form of glasses is plummeting as their quality skyrockets. Once again: Mature and well-aligned technologies.

But having a camera taking continuous video of everything you see is a hard sell for the general public. Most people also object, justifiably, to being video-recorded as part of normal life, and the early Google Glass, whose wearers were nicknamed “Glassholes,” didn’t help matters.

And “sexy” is its own kind of hurdle. Most people object to glasses that make them look like they just got off the Starship Enterprise. But getting glasses to look “normal” while containing all the technology of the next computing platform (processing chips, sensors, cell phone and audio circuitry, high-resolution cameras) has frustrated engineers and designers for at least thirty years. We’re not quite at “normal glasses” yet, but it’s only a matter of time.

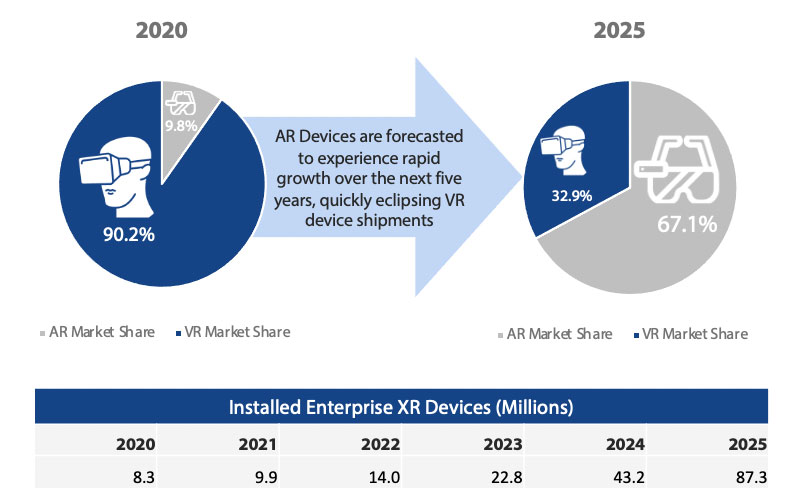

AR will surpass VR with a combined installed base of 87.3M by 2025

OUR BET IS ON AUGMENTED REALITY

These glasses will produce a layer of augmented reality (AR) on top of the real world. AR is already embraced in smartphone apps—from Pokémon Go to city walking tours. But, again, AR in a handheld screen immediately takes you out of the immersive experience.

AR glasses are quite distinct from virtual reality (VR) glasses, which deliberately block out the real world to produce the illusion of a fictional world. Even though Facebook’s purchase of Oculus VR demonstrates that “full immersion,” primarily for gaming, is a real market, Facebook’s Mark Zuckerberg, who is also pursuing AR glasses aggressively, admits that he doesn’t see a unified set of glasses capable of doing both.

For enterprise use and working in the real world, our bet is on AR’s ability to layer stored and live information as a “heads-up” display on the real world. Killer apps for this capability already exist in many venues. Medical use cases abound: in knee operations, and soon shoulder operations, surgeons are routinely guided by overlays from AR glasses as they align artificial joints and place pins and screws.

The COVID-19 pandemic and the climate crisis have further boosted medical adoption of AR. Doctors who couldn’t travel, or preferred not to add to jet engine pollution, have routinely assisted distant surgeons, who share their “eyes” with far-flung colleagues and receive spoken advice or even diagrams drawn in the “air” in front of them.

The possible applications of AR are nearly endless, but other immediate candidates include any work context where mistakes are costly and time is of the essence: Workers doing car assembly, repair crews in factories and on oil wells, warehouse pickers, and insurance agents who need databases of information to calculate damage assessments.

Top Horizontal XR Use Cases

WE MUST LEARN FROM EARLIER MISSTEPS

We have often talked about the merger of atom-based and bit-based worlds. This is now literally occurring as the physical computing hardware layer of the IoT captures and distributes data for compute and storage via blockchain, edge and mesh networks.

Meanwhile, our environments will soon exceed the intelligence capabilities of human beings. Every designed phenomenon, from buildings to manufactured objects, will be populated by billions of self-executing smart contracts, and our built surroundings will essentially become sentient.

Quantum computers, operating in a realm where classical physics does not apply, will intensify these developments, enabling us to look at reality as if through a magical microscope, untangling the seeming chaos of traffic patterns or the heretofore indecipherable pulse of global markets.

As we move into this confluence of worlds with the union of spatial, cognitive, and distributed computing, our spatial technologies, supported by a spatial web, cannot be built on the existing frameworks and surveillance capitalism schemes of the present-day web. Those structures are geared toward the storage and analysis of our behavior on someone else’s servers, and among all their other weaknesses they have the potential to be hijacked and abused by malevolent actors both human and algorithmic. Instead of web sites and apps being hacked, our homes, schools, cars, biology and brains will be hacked. That’s a template for true global dystopia.

In developing spatial computing and the spatial web, it is crucial that we adopt ethical rules and boundaries around the use of these systems. In particular we need to prevent technological “lock-in” whereby proprietary technologies become permanently embedded into the infrastructure of global systems. In liberating ourselves from the computer screen, we must also strive to free ourselves from the traditional software business models of centralized power and siloed platforms that will cripple not only innovation but basic human rights. ✦