THE ERA OF FLYING SOLO IS REALLY, TRULY OVER

Over the last decade or more, Smart Systems and IoT technology developers have focused their core development work and innovations primarily on serving OEMs and related service providers and intermediaries. Digital and IoT technologies are driving many new growth opportunities and efficiencies for OEMs, based on new data collection, management and analytics tools that provide a deeper understanding of a connected product or machine’s performance and usage. Because these systems deliver immediate efficiency gains and new applied value, OEMs have been the dominant adopters of new Smart technologies and, like “Typhoid Mary,” have carried these innovations to end customers, where their presence and impact are spreading like a contagion.

However, the dull and dreary “solo” solutions that make up a significant percentage of Smart Systems today (equipment health, meter reading and fleet tracking applications, for example) have not evolved beyond simple applications focused on alerts, alarms and remote diagnostics. The market has been slow to adopt more robust systems largely because of the technical complexity of deploying networked systems and the challenges many suppliers face in shifting to services-focused business models.

As end customers in factories, hospitals, buildings and other settings become more familiar with digital and Smart Systems capabilities, they are realizing that these innovations will push the boundaries of how data from products, systems and equipment can be used to manage and optimize processes within their operations. This, in turn, has increased pressure on equipment manufacturers and service providers to embrace data management and analytics tools.

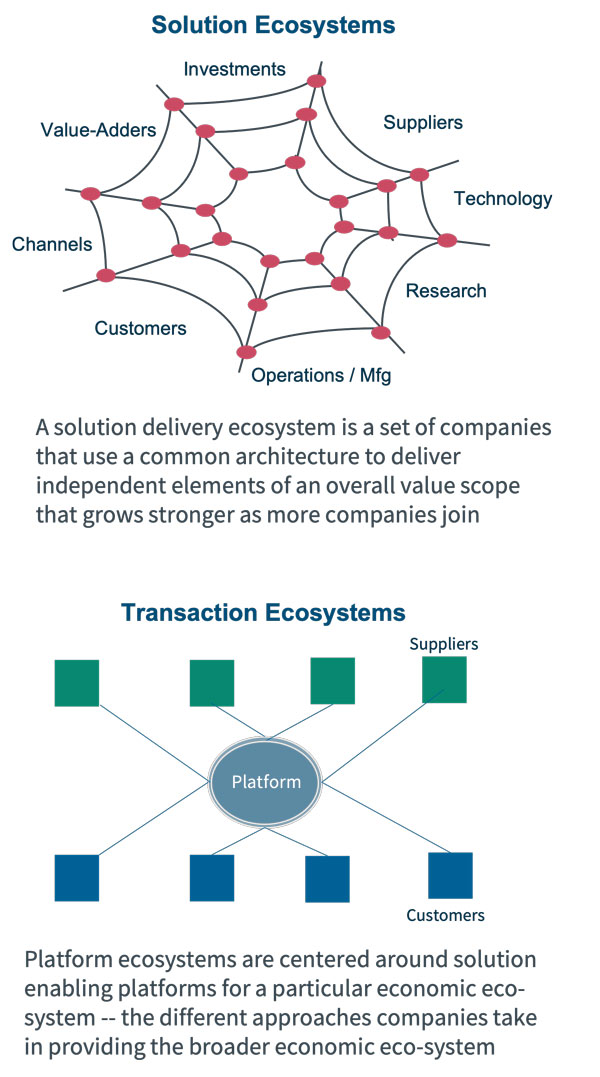

As software tools mature and new hardware technologies increase performance and open up new use cases, applications based on deeper peer-to-peer interactions between sensors, machines, data, systems and people will drive more compound and dynamic value streams. These innovations are powering more complex applications in a variety of industries, from predictive maintenance to equipment system optimization to synchronized multi-tiered support. However, the challenges of gathering machine data and integrating diverse data types have been major adoption hurdles, particularly in industries where the brands and equipment types number in the hundreds.

With that said, for at least twenty years, Harbor Research has been telling our clients that “the era of flying solo is over.” But this is hard advice to accept. Compared to flying in a group, flying solo is easy. You have near-complete control over everything.

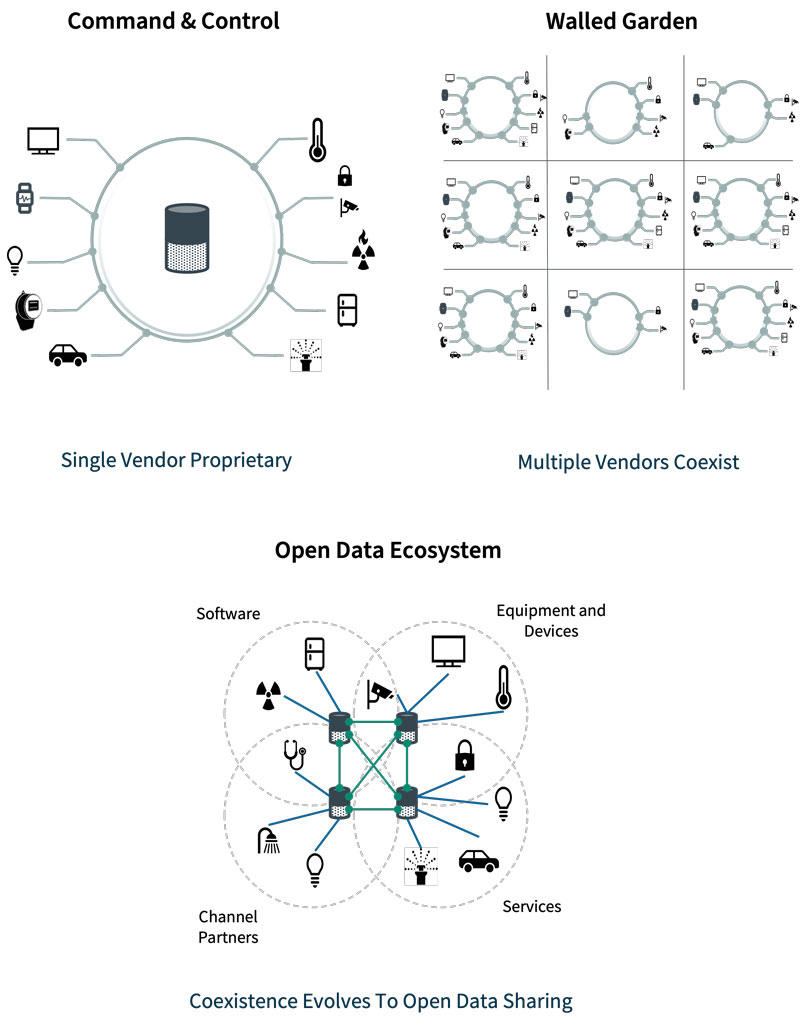

Of course, most businesses insist that they’re not flying solo. They depend upon many relationships; they’re part of complex value chains. Yes, but those excuses miss the point. Their relationships aren’t organic ecosystems. They’re command-and-control, hub-and-spoke arrangements, and the business in question is always the hub.

“You want us to collaborate and share our data,” we often hear from the C-suite. “But our data’s the new path to profitability.” Systems suppliers that have come from a legacy of “solo” solution delivery resist fundamental change. But change they must.

The healthcare sector illustrates the B2B dilemma and the many challenges players face in trying to create ecosystems. Today, the average 200+ bed hospital has over 250 brands of equipment and devices, so the typical hospital patient interacts with over 75 devices per day. If every device and machine has its own embedded intelligence and monitoring scheme, how should healthcare CIOs respond to hundreds of equipment OEMs showing up on their doorstep, each proclaiming that it has superior digital and remote data collection capabilities?

The simple truth is that optimizing any system or resource illustrates the value of shared systems and data. Customers want to integrate data from diverse devices, machines and equipment systems to enable novel ways of solving operational and business problems. Remote connectivity alone may help the manufacturer of a machine provide more efficient service and support, but it does not allow the end user to leverage much intelligence across myriad brands, suppliers and diverse systems.